A comprehensive examination of capability, adoption, ecosystem, and what “winning” actually means

The artificial intelligence race of 2026 is not a sprint. It is a multidimensional chess match — played simultaneously across research labs, boardrooms, developer communities, and government corridors — with no single winner, no finish line, and stakes that grow more consequential with every passing quarter.

To understand who is ahead, you first need to abandon the comfortable simplicity of league tables. The question “who is winning AI?” is a bit like asking who is winning civilization. It depends entirely on what you mean by winning, who you are asking, and what you plan to do with the answer. But the question still matters — because the labs leading today will shape how AI is used, governed, and distributed for the next decade. And that, quite plainly, changes everything.

This essay attempts something honest and difficult: a clear-eyed snapshot of the major players competing for AI leadership in 2026, what each does better than the rest, and what the competition itself reveals about the future of intelligence as infrastructure.

Who Is Leading Right Now? A 2026 Snapshot

OpenAI

CONSUMER ADOPTION: ChatGPT remains the world’s most-used AI product with strong brand recognition and user habits.

Google DeepMind

RESEARCH AT SCALE: No lab matches Google’s combination of research output, compute infrastructure, and distribution.

Anthropic

SAFETY + ENTERPRISE: Claude is the model of choice for organizations where reliability and safety matter most.

Meta AI

OPEN-SOURCE ECOSYSTEM: LLaMA’s ecosystem is the largest in the open AI world, powering community-driven innovation.

What Does “Winning the AI Race” Really Mean?

Model Intelligence vs. Real-World Impact

The popular shorthand for AI leadership is benchmark performance. Which model scores highest on MMLU, HumanEval, MATH, or the latest reasoning gauntlet? These leaderboards generate enormous attention — and enormous distortion. A model can top every academic benchmark while failing to write a coherent sales email or debug a real codebase under production constraints. Benchmarks measure what labs optimize for, not necessarily what users need.

Real-world impact is messier and more revealing. It shows up in how many developers are building with a model’s API, how often enterprise customers renew their contracts, how reliably a system handles edge cases without hallucinating, and whether the product is fast enough to feel alive. The labs that have learned to close the gap between benchmark brilliance and practical dependability are the ones genuinely advancing the field.

Ecosystem Power

No AI model exists in isolation. The models that matter are embedded in ecosystems — networks of APIs, integrations, plugins, partner platforms, and developer communities that multiply a model’s reach far beyond what its raw intelligence could achieve alone. ChatGPT’s dominance in consumer AI is not purely a function of GPT-4’s capabilities; it is a function of being first, of building deep platform integrations, and of the flywheel effect that comes from hundreds of millions of users generating feedback and use cases at scale.

Ecosystem power is the moat that keeps a lab relevant even when a competitor releases a technically superior model. It is also why open-source matters so profoundly — because when developers can download, modify, and deploy a model themselves, the ecosystem grows in directions no single company could have planned.

Speed of Innovation

In a field where a breakthrough paper can reshape competitive dynamics in weeks, iteration speed has become a strategic asset in its own right. The labs releasing new models and capabilities fastest are setting the pace for everyone else — forcing competitors to respond, adapt, or fall behind. But speed has a shadow: models shipped fast can be brittle, misaligned, or dangerous. The labs navigating this tension — innovating rapidly while maintaining quality and safety — are demonstrating the most sophisticated form of leadership.

Accessibility and Reach

One of the defining fault lines of 2026 is the question of openness. Should powerful AI models be open-sourced, available to anyone with a laptop and a reason? Or should they be controlled, access-gated products, subject to safety filters and usage policies? This is not merely a philosophical debate — it has concrete consequences for who gets to use AI, how it gets adapted for local needs, and whether power over the technology concentrates in a handful of American companies or distributes more widely across the globe.

OpenAI: The Productization Pioneer

OpenAI’s greatest achievement is not GPT-4, or even GPT-4o. It is ChatGPT — a consumer interface so frictionless and well-designed that it introduced hundreds of millions of people to conversational AI in a matter of months. That kind of product-market fit is extraordinarily rare, and it has given OpenAI a gravitational advantage that no competitor has yet fully overcome.

Through its deep partnership with Microsoft, OpenAI’s models are embedded in Copilot for Office, GitHub Copilot, Azure OpenAI Service, and a growing number of enterprise software tools. This distribution advantage means OpenAI is often the default choice for enterprises evaluating AI — not because it is always the best model for every task, but because it is already there, already integrated, already trusted by IT departments that have spent careers standardizing on Microsoft infrastructure.

OpenAI’s weakness, paradoxically, is its own ambition. The pressure to ship faster, to monetize more aggressively, and to keep pace with well-funded competitors has occasionally led to products that felt rushed — early ChatGPT plugins, uneven GPT Store quality, and the turbulence of its own leadership drama all remind observers that speed and stability are not always compatible. But OpenAI’s ability to recover, iterate, and maintain its position through adversity is itself a form of competitive strength.

Google DeepMind: The Research Colossus

No organization on earth has invested more deeply in AI research over a longer period than Google. DeepMind — now merged with Google Brain into a unified Google DeepMind — combines decades of foundational research (AlphaGo, AlphaFold, the original transformer paper) with Google’s extraordinary infrastructure advantages: custom TPU chips, petabytes of proprietary training data, and global compute capacity that no startup or university can replicate.

The Gemini model family represents Google’s answer to GPT-4 and Claude, and in its latest iterations, it is genuinely competitive — especially in multimodal tasks where text, images, audio, and video must be understood together. Google’s integration strategy is also formidable: Gemini is being woven into Google Search, Google Workspace, Android, and Chrome, which means it will reach more people through passive daily use than any product requiring deliberate adoption could ever match.

Google’s challenge is not capability — it is coherence. A company of Google’s size moves slowly at the product level even when its research moves quickly. The AI product experiences it ships have sometimes felt fragmented or cautious, shaped by the fear of cannibalizing its own search advertising business. The tension between Google’s extraordinary AI capability and its organizational complexity remains one of the most fascinating strategic puzzles in technology.

Anthropic: The Safety-First Contender

Anthropic was founded on a specific thesis: that the most important problem in AI is not capability, but alignment — making sure that increasingly powerful AI systems do what humans actually intend, and do not cause harm through misuse, miscalibration, or emergent behaviors their creators did not anticipate. This is not a marketing position. It is reflected in how Claude models are trained, evaluated, and deployed.

The Claude 3 and Claude 4 series have earned genuine respect — particularly in enterprise contexts where reliability, nuance, and the ability to handle complex, sensitive tasks without catastrophic failure matter more than raw benchmark scores. Anthropic’s Constitutional AI approach, its investment in interpretability research, and its willingness to publish safety findings even when those findings are uncomfortable have built a kind of credibility that is difficult to manufacture and easy to lose.

Where Anthropic leads is also where it faces its greatest test: can safety-first AI be commercially competitive in a market that rewards speed and spectacle? The evidence so far is encouraging. Enterprise clients willing to pay for dependable, well-aligned AI have proven to be a substantial and growing market — and Anthropic’s investment from Amazon has provided the compute resources to compete at scale.

Meta AI: The Open-Source Revolution

Meta’s decision to open-source its LLaMA model family was, in retrospect, one of the most consequential strategic choices in the AI industry’s recent history. It was not altruism — it was strategy. By releasing powerful models to the developer community, Meta seeded an ecosystem of fine-tuned variants, specialized applications, and enthusiastic contributors who collectively multiplied the value and reach of Meta’s base research far beyond what any closed commercial product could have achieved.

LLaMA 3 and its successors have become the foundation of an enormous open-source AI ecosystem. Developers building local AI applications, researchers experimenting with novel training approaches, companies in regions with privacy regulations that prevent cloud-based AI, and governments wary of dependence on American AI infrastructure have all found in LLaMA a capable, customizable, and cost-effective foundation. This is a form of influence that does not show up in revenue figures — but it shapes the long-term distribution of power over AI technology in ways that matter enormously.

Emerging Challengers Worth Watching

The competitive landscape extends well beyond the four headline players. Elon Musk’s xAI and its Grok models have carved a niche through direct integration with the X platform and an intentionally less restricted personality. France’s Mistral AI has become a European champion of efficient, high-quality models — demonstrating that frontier AI does not require frontier-scale capital. Specialized labs focused on coding, biology, legal AI, and enterprise automation are also quietly building durable positions in specific domains where generalist models remain imperfect.

China’s AI development — from Baidu’s ERNIE, to Alibaba’s Qwen, to a growing cohort of well-funded startups — represents a parallel ecosystem that Western analysis consistently underestimates. The global AI race is not merely an American contest.

Head-to-Head: Where Each Lab Leads

| Dimension | OpenAI | Google DeepMind | Anthropic | Meta AI |
|---|---|---|---|---|
| Reasoning & Coding | Strong | Strong | Strong | Competitive |
| Multimodal AI | Excellent | Best-in-class | Growing | Growing |
| Consumer Reach | Dominant | Massive | Growing | Massive (social) |
| Enterprise Trust | Strong | Fragmented | Leading | Limited |
| Developer Ecosystem | Largest closed | Solid | Growing fast | Largest open |
| Safety & Alignment | Improving | Serious investment | Industry-leading | Less prioritized |
| Open-Source Commitment | Minimal | Selective | Closed | Core strategy |
| Infrastructure Advantage | Microsoft/Azure | Google Cloud / TPUs | AWS (Amazon deal) | Self-owned at scale |

Key Trends Defining the Race

Rise of Multimodal AI. The era of text-only language models is ending. The frontier is now models that see, hear, speak, and reason across modalities simultaneously — turning AI from a reading assistant into something closer to a perception system. Google DeepMind leads here, though OpenAI and others are closing the gap rapidly.

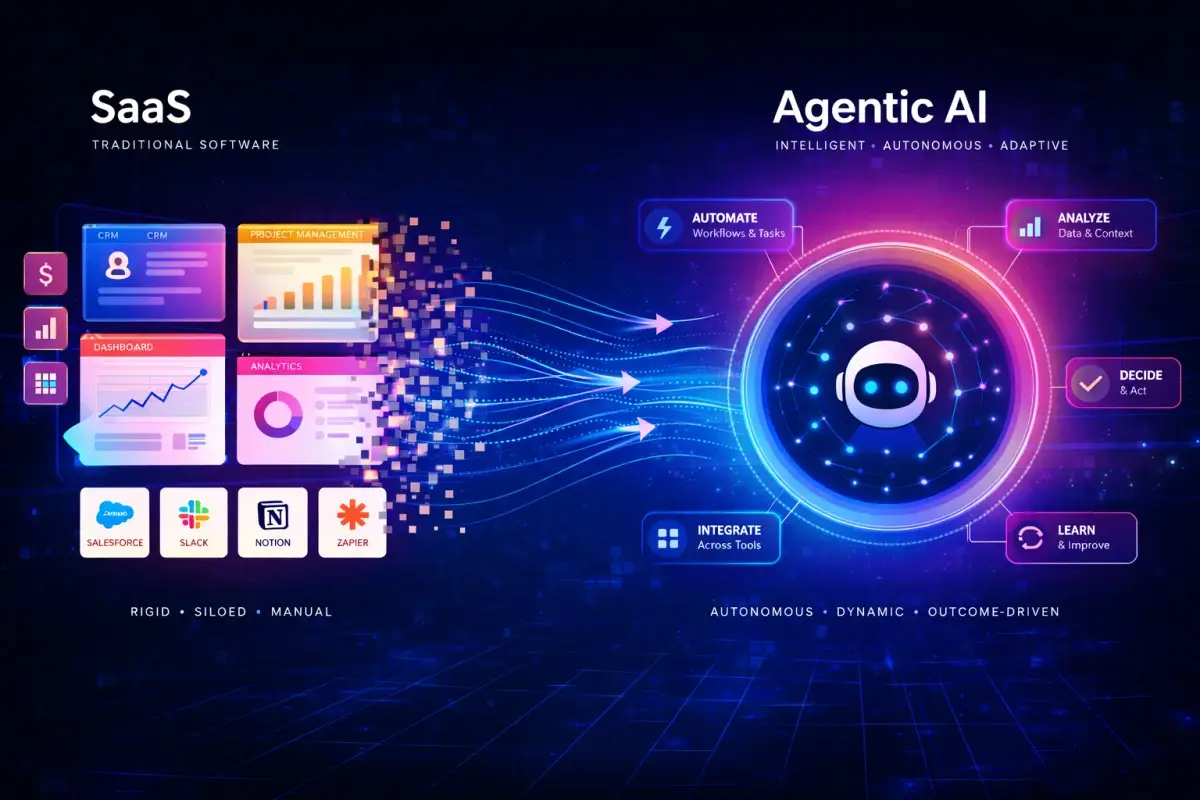

AI Agents and Autonomous Systems. The shift from responding to acting is the most consequential trend of 2026. AI agents, meaning systems that can browse the web, write and execute code, manage files, and coordinate with other AI systems, are moving from research demos into production. Every major lab is racing to build reliable agentic infrastructure, with OpenAI’s Operator agent and Anthropic’s computer use capabilities leading early deployments.

The Open vs. Proprietary Battle. Meta’s open-source strategy has permanently changed the competitive landscape. The question now is not whether open models can match proprietary ones on capability — they largely can, in many domains — but whether the fragmentation of open ecosystems creates safety risks that centralized, controlled development would prevent.

Hardware and Infrastructure as Moat. The AI race is increasingly a race for silicon. Custom chips from Google (TPUs), emerging silicon from Amazon (Trainium), and the ongoing dominance of NVIDIA’s GPUs mean that labs with deep hardware relationships have structural training advantages that pure software innovation cannot overcome. Microsoft’s investment in custom AI hardware for OpenAI reflects this reality.

Real-World Impact: Where Each Lab Dominates

Consumer Tools

In the space of chatbots, writing assistants, and creative AI tools, OpenAI’s ChatGPT holds first-mover dominance. But Gemini’s embedding in Android and Google Search gives it enormous passive reach — billions of users will encounter Google’s AI whether they seek it out or not. Claude’s consumer interface, while smaller, attracts users who specifically want a more thoughtful, nuanced AI experience. Meta’s AI assistant, embedded across WhatsApp, Instagram, and Facebook, reaches a population that may never use a dedicated AI product — and that demographic reach matters more than most AI observers acknowledge.

Enterprise AI

Enterprise AI adoption is where the most significant money is moving in 2026. Here, trust, compliance, security, and integration reliability matter far more than benchmark scores. Anthropic has built remarkable traction in regulated industries. OpenAI, through Microsoft’s enterprise sales force, has penetrated large organizations at scale. Google is leveraging existing Workspace relationships to land Gemini. The enterprise race is long and relationship-driven, and no single player has won it.

Developer Ecosystem

For developers building AI-powered applications, the choice of foundation model is increasingly pragmatic. OpenAI’s API remains the default starting point for many projects — its documentation is excellent, its reliability has improved, and its community of tutorials, wrappers, and integrations is vast. Anthropic’s API is the preferred choice for applications requiring nuanced instruction-following and safety. Meta’s LLaMA is the foundation for anyone who wants to run AI locally, fine-tune aggressively, or avoid API costs entirely. All three are legitimate choices, which is itself a sign of a maturing market.

Conclusion: No Single Winner — And That’s the Point

The search for a single winner of the AI race is a category error. OpenAI leads in consumer adoption. Google DeepMind leads in research depth and infrastructure scale. Anthropic leads in safety credibility and enterprise trust. Meta leads in open-source reach and community momentum. Each of these forms of leadership is real, consequential, and unlikely to collapse in the near term.

The race is accelerating, not approaching a finish line. The capabilities of the best models available in April 2026 will be superseded by the end of the year. The strategic positions labs hold today will shift as regulation matures, as compute economics evolve, and as the applications of AI expand into domains — healthcare, scientific research, education, government — where the stakes are orders of magnitude higher than writing assistance or code completion.

The deepest insight, perhaps, is this: the real winner of the AI race may not be any single lab, but the ecosystem itself — the expanding network of developers, researchers, users, and institutions who are learning to work with AI, building the norms and tools and institutional knowledge that will determine whether this technology amplifies human potential or distorts it. The labs are building the engines. The rest of us are deciding where to drive.